Start here: how our SEO split tests work

If you aren't familiar with how we run the controlled SEO experiments that form the basis of all our case studies, you might find it useful to read the explanation at the end of this article before digesting the details of the case study below. If you'd like to get a new case study by email every two weeks, just enter your email address here.

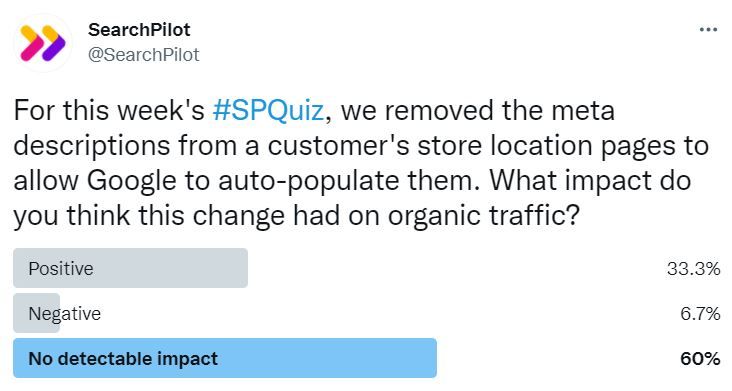

In our most recent #SPQuiz, we asked our followers what they thought would happen to organic traffic if we removed meta descriptions to allow Google to populate them. Here’s what they thought:

A majority of voters expected no detectable impact on organic traffic, while a third expected a positive one. The majority turned out to be right at our standard confidence level, but you might be surprised to see that the result was positive at a lower confidence level.

The Case Study

For the last couple of weeks, the SEO world has been obsessed with the recent change to how Google decides the titles of snippets within search results. As of August 2021, Google is using a new system to generate the text for the links, going beyond the HTML title tag in a more widespread way.

In Google’s statement they actually claim to have been looking beyond the title tag since 2012, and we’ve been accustomed to Google having a mind of its own about another area of the search results snippet for a long time - the meta description. According to a Portent study, Google rewrites 70% of meta descriptions when forming the text within the snippet. Now that it seems title tags are headed in the same direction (rewrites are at 58% according to Moz), we thought it would be a good time to share a case study about what happens when you leave Google to it in setting the metadata. If you’re tempted to leave your title tags blank and let Google get on with it, here are some learnings from meta descriptions that might be applicable (bring your own pinch of salt).

A customer had a website with hundreds of pages relating to physical storefronts across the USA. These pages previously had generic meta descriptions without a lot of unique information relating to each page. The customer did not have the resources at that time to do custom rewrites for all of the meta descriptions, and in any case did not know what to put in the new description for the best impact. For these reasons, they decided to delete the meta descriptions altogether, and allow Google to pick a snippet from the text on the page.

| Control | Variant |
|---|---|
| Generic meta description in place | Meta description removed, snippet text chosen by Google |

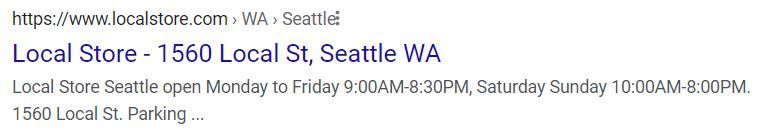

After we removed the meta description from the page, Google pulled information specific to each location into the snippet, including opening hours, address and parking information. The snippets weren’t always grammatically correct, and the information was often slightly garbled, but they gave an indication of what kind of information Google thought would be useful for users.

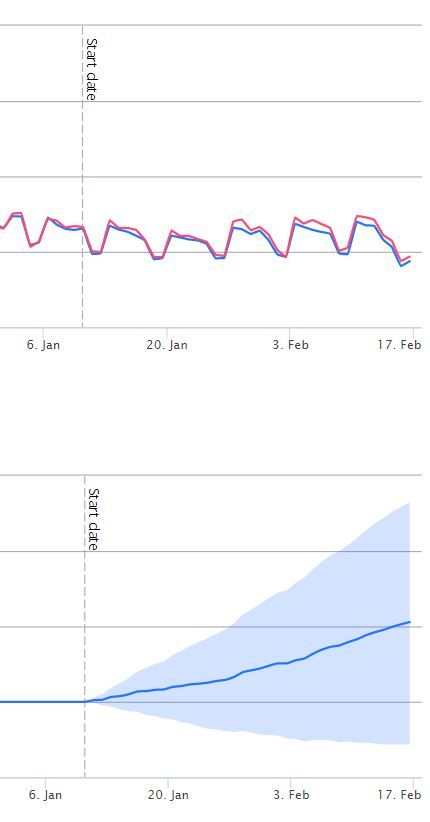

We ran this as an SEO split test, and the results were as follows:

The result was inconclusive at the standard 95% confidence level. It was, however, positive at 80% confidence. This means there is a 20% (1 in 5) chance that a change with no real impact would produce test results like these. That’s not enough to say with confidence that there is an effect, but it’s enough to encourage more investigation.
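To make the difference between the two confidence levels concrete, here is a minimal sketch in Python. The uplift estimate and standard error are hypothetical numbers chosen for illustration, not figures from this test, and the normal approximation is a simplifying assumption rather than a description of the analysis behind the result above.

```python
from statistics import NormalDist


def confidence_interval(estimate, std_error, level):
    """Two-sided normal-approximation interval around an estimated uplift."""
    # z-score that leaves (1 - level) / 2 probability in each tail
    z = NormalDist().inv_cdf(0.5 + level / 2)
    return estimate - z * std_error, estimate + z * std_error


def excludes_zero(interval):
    """True when the whole interval sits on one side of zero."""
    low, high = interval
    return low > 0 or high < 0


# Hypothetical values (percent change in organic traffic), NOT from the case study
estimated_uplift = 3.0
std_error = 2.0

ci_95 = confidence_interval(estimated_uplift, std_error, 0.95)
ci_80 = confidence_interval(estimated_uplift, std_error, 0.80)

print(f"95% interval: {ci_95[0]:.2f} to {ci_95[1]:.2f}")  # -0.92 to 6.92
print(f"80% interval: {ci_80[0]:.2f} to {ci_80[1]:.2f}")  # 0.44 to 5.56
print("Conclusive at 95%:", excludes_zero(ci_95))  # False
print("Conclusive at 80%:", excludes_zero(ci_80))  # True
```

With these made-up inputs the wider 95% interval still includes zero, while the narrower 80% interval sits entirely above it. That is the pattern described here: inconclusive at the standard level, positive at a lower one.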

This test also gave the customer valuable insight into what kind of information Google perceives as useful, which informed their future efforts to write bespoke meta descriptions for these pages.

How does this apply to the current question about Google’s approach to title tags? Leaving the title blank may not be a winner in terms of traffic, but it may be a good way to understand what Google expects to see in titles, and could give you ideas for what to include.

How our SEO split tests work

The most important thing to know is that our case studies are based on controlled experiments with control and variant pages:

- By detecting changes in performance of the variant pages compared to the control, we know that the measured effect was not caused by seasonality, sitewide changes, Google algorithm updates, competitor changes, or any other external impact.

- The statistical analysis compares the actual outcome to a forecast, and comes with a confidence interval so we know how certain we are that the effect is real.

- We measure the impact on organic traffic in order to capture changes to rankings and/or changes to clickthrough rate (more here).
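As a rough illustration of the forecast-based comparison described in the list above, the sketch below uses the control pages to forecast what the variant pages would have done without the change. It is a deliberately simplified stand-in (a fixed pre-test traffic ratio), not the model used on the SearchPilot platform, and all function names and traffic numbers are hypothetical.

```python
def forecast_variant(pre_control, pre_variant, post_control):
    """Forecast variant traffic from control traffic.

    Assumes the variant/control ratio seen before the test would have
    held during the test if no change had been made.
    """
    ratio = sum(pre_variant) / sum(pre_control)
    return [ratio * sessions for sessions in post_control]


def relative_uplift(post_variant, forecast):
    """Actual variant traffic relative to its forecast, as a fraction."""
    expected = sum(forecast)
    return (sum(post_variant) - expected) / expected


# Hypothetical weekly organic sessions, invented for illustration
pre_control = [1000, 1100, 900, 1000]
pre_variant = [500, 550, 450, 500]

# During the test, control traffic rises (for example, seasonality).
# Because the forecast is built from the control, that rise is already
# accounted for and is not mistaken for an effect of the change.
post_control = [1200, 1300, 1100]
post_variant = [630, 680, 580]

forecast = forecast_variant(pre_control, pre_variant, post_control)
uplift = relative_uplift(post_variant, forecast)

print(f"Forecast variant sessions: {sum(forecast):.0f}")  # 1800
print(f"Actual variant sessions:   {sum(post_variant)}")  # 1890
print(f"Estimated uplift:          {uplift:.1%}")         # 5.0%
```

A point estimate like this says nothing about certainty on its own. In a real analysis it would be paired with a confidence interval, as the second bullet describes.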

Read more about how SEO testing works or get a demo of the SearchPilot platform.