
Let’s start by going back to 1998, back before anyone knew what Google was and the likes of Tom Anthony and Will Critchlow had just begun to build websites from their bedrooms. It was important to repeat your keywords keywords keywords across the site so that AltaVista & co. would know your keywords were very important, as keywords were how you ranked. You wanted to make sure your keywords were in your title, and that your keywords were in H1 tags, and that your keywords were just about everywhere else. (Ok, I can’t keep this up any longer, but you get the point).

Joking aside, title tags and H1 tags have always been at the centre of SEO - every good site audit mentions them. However, as things have evolved and we’ve had PageRank, TF-IDF, Penguin and RankBrain, it is tempting to wonder if optimising these things makes much of a difference anymore. Surely getting close is good enough - Google should work it out with all their deep learning expertise, right? At Distilled [and now at SearchPilot, Ed.] we have seen that on large pages, getting these things right really does seem to make a difference. Yet it has always been hard to prove.

However, we’ve been piloting our SEO split-testing platform and one of the tests we’ve run focussed on exactly this type of change. This post demonstrates how improving title tags and H1s can make an appreciable difference.

SEO Split-Testing allows you to test small changes

As I mentioned, testing this sort of on-page change has historically been quite difficult, as it is hard to control for other variables. For example, what happens if Google rolls out an algorithm update around the same time you make your change? How are your other changes affecting this test? What are competitors doing that affects the SERPs?

Using an SEO split-testing approach allows you to control for all of these factors, and thus determine a link between the change you want to test and any impact it has. Will wrote a great post on SEO split-testing and Pinterest wrote a very interesting post about their SEO split-testing work.

SEO split-testing is hugely powerful for a whole variety of reasons. The important aspect for this post is that you can test small changes that may have a business impact, but which are hard to detect through the noise when you don’t have a system in place. Aggregating a few of these small changes can make a big difference in putting you above your competitors and driving more traffic to your site.

The test I’m showing here was put together by me and our Head of R&D, Tom Anthony, and was run using SearchPilot.

The Test Setup

The experiment I ran was on Concert Hotels (www.concerthotels.com), which has ~20,000 location category pages, each of which lists a number of hotels near that location. I ran a 50/50 split test, meaning we randomly chose half of these location pages and made the changes below. We left the other half as they were. With some setup, you can also run uneven splits (e.g. 10% change, 90% control), allowing you to ‘taste-test’ changes on smaller sets of pages.
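As a rough illustration of how pages might be split into the two groups, here is a minimal sketch. It assumes a hash-based assignment, which is my own choice for the example and not necessarily how SearchPilot does it; the `assign_bucket` function, the salt and the example URLs are all hypothetical.

```python
import hashlib


def assign_bucket(url: str, variant_share: float = 0.5, salt: str = "title-h1-test") -> str:
    """Return 'variant' or 'control' for a page URL, stably across runs.

    Hashing the URL (plus a per-test salt) gives an effectively random but
    repeatable assignment, so a page never switches groups mid-test.
    """
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    # Map the first 8 hex characters to a number in [0, 1).
    position = int(digest[:8], 16) / 0x100000000
    return "variant" if position < variant_share else "control"


# Hypothetical URLs, for illustration only.
pages = [f"https://www.example.com/venue-{i}" for i in range(20000)]
buckets = [assign_bucket(page) for page in pages]
variant_count = buckets.count("variant")
print(f"{variant_count} variant pages, {len(pages) - variant_count} control pages")
```

Setting `variant_share=0.1` would give the uneven 10/90 split mentioned above.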

I rolled out a change to both the title tags and H1 tags on ~10,000 pages. They previously read:

Title: <<Location>> Hotels, NY | ConcertHotels.com

H1: <<Location>> Hotels

These were changed to:

Title: Hotels near <<Location>>, Rochester, NY | ConcertHotels.com

H1: Hotels near <<Location>>
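The new templates can be expressed as a small function. This is only a sketch of the pattern shown above: the function name, its parameters and the example venue are hypothetical, and the city and state are assumed to be available for each location page.

```python
def variant_tags(location: str, city: str, state: str) -> dict:
    """Build the variant title and H1 for one location page."""
    return {
        "title": f"Hotels near {location}, {city}, {state} | ConcertHotels.com",
        "h1": f"Hotels near {location}",
    }


# "Example Arena" is a made-up venue name used purely for illustration.
tags = variant_tags("Example Arena", "Rochester", "NY")
print(tags["title"])
print(tags["h1"])
```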

I made this fairly innocuous-looking change based on keyword research I had done around venue search volume and user intent. Rather than focusing on individual keywords, I focused my attention on keyword groups. I highly recommend reading Sam Nemzer’s post “Are Keywords Really Dead?” for our latest thinking on this topic. Across hundreds of locations our findings indicated:

The “Hotels near…” structure had over double the amount of searches per month.

| Search Query Structure | Overall Search Volume |
|---|---|
| Hotels near «Location» | 156,510 |
| «Location» Hotels | 72,300 |

Users often refined their search by including the city, the state or both in their search query, so I decided to focus on improving the long-tail opportunity for these pages.

E.g.

- Hotels near «Location», New York

- Hotels near «Location» Arizona
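The “over double” figure follows directly from the two totals in the table. A quick check, using only those numbers:

```python
# Overall monthly search volumes, taken from the table above.
volumes = {
    "Hotels near «Location»": 156_510,
    "«Location» Hotels": 72_300,
}

ratio = volumes["Hotels near «Location»"] / volumes["«Location» Hotels"]
share = volumes["Hotels near «Location»"] / sum(volumes.values())

print(f"'Hotels near' has {ratio:.2f}x the volume")    # 2.16x
print(f"That is {share:.1%} of the combined searches")  # 68.4%
```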

The Results

We rolled out the change and started rampantly refreshing the Google Analytics dashboards, but nothing happened, of course. It takes Google a bit of time to recrawl the pages and to start acting upon the changes.

After a few days we started to see what looked like a difference in the traffic levels between our control group and our variant group. It is hard at a glance to know whether the changes were real or in our heads.

I won’t dig into the details of the maths here (see Will’s post above); suffice to say that having a framework for gathering and analysing the data makes all the difference.

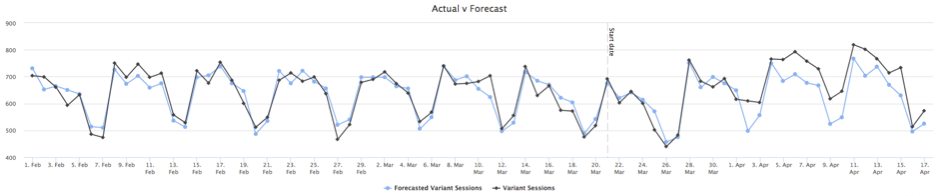

The first graph we saw in the SearchPilot dashboard look like this:

The black line in the graph above represents traffic to our variant group (the group of pages with our title tag and header changes). The blue line is a model that uses historical data to forecast the traffic we would expect to see to the variant group. You can start to see these lines diverge around the 1st of April. This tells us that the variant group is getting more traffic than the model predicts, which suggests the test is a success.

The model is also based on the control pages. So, if something else changes during our test (for example, a Google algorithm update), then the model would be able to detect and account for that.
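To make the idea concrete, here is a deliberately simplified sketch. It is not the model SearchPilot uses, which I haven’t described here; it swaps in a plain linear regression that learns how variant traffic tracks control traffic before the change, then uses control traffic during the test to forecast what the variant pages would have done without it. All of the traffic numbers are made up for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of ys = intercept + slope * xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up daily organic sessions, for illustration only.
pre_control = [1000, 1040, 980, 1100, 1060, 1020, 990]
pre_variant = [1010, 1050, 985, 1110, 1065, 1030, 1000]
test_control = [1030, 1080, 1000, 1120]
test_variant = [1090, 1150, 1060, 1200]

# Learn the pre-test relationship between the two groups.
slope, intercept = fit_line(pre_control, pre_variant)

# Forecast variant traffic from what the control pages did during the test.
forecast = [intercept + slope * c for c in test_control]
daily_uplift = [actual - expected for actual, expected in zip(test_variant, forecast)]

for day, uplift in enumerate(daily_uplift, start=1):
    print(f"day {day}: {uplift:+.0f} sessions vs. forecast")
```

Because the forecast is driven by the control pages, anything that hits both groups equally (such as an algorithm update) moves the forecast too, and so does not show up as uplift.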

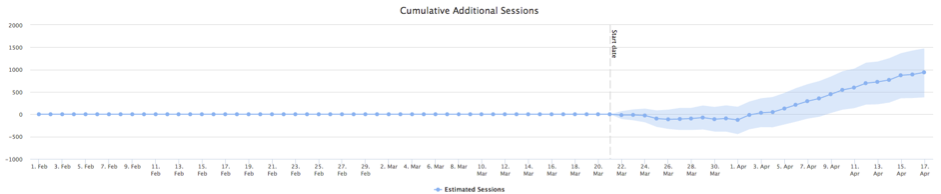

This graph is showing the cumulative effect of the increase in traffic vs. our model. The shaded blue area represents a 95% confidence interval. You can see that on the 9th April we became 95% confident that the results we are seeing are not caused by chance.
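One simple way to picture how a cumulative chart like this reaches significance is sketched below. It assumes the daily forecast errors are independent and roughly normal, which is a simplification of my own for this example and not a description of the maths behind the dashboard. The numbers are again made up.

```python
import math
from itertools import accumulate
from statistics import stdev

# Made-up forecast errors from before the test, used to estimate the noise level.
pre_residuals = [12.0, -8.0, 5.0, -15.0, 9.0, -3.0, 4.0, -6.0]
# Made-up daily uplift (actual minus forecast) once the change is live.
daily_uplift = [5.0, 14.0, 22.0, 30.0, 28.0, 35.0]

sigma = stdev(pre_residuals)
cumulative = list(accumulate(daily_uplift))

first_significant_day = None
for day, total in enumerate(cumulative, start=1):
    # Under the independence assumption, noise in a sum of `day` daily
    # errors has standard deviation sigma * sqrt(day).
    half_width = 1.96 * sigma * math.sqrt(day)
    lower, upper = total - half_width, total + half_width
    print(f"day {day}: cumulative {total:.0f}, 95% interval [{lower:.1f}, {upper:.1f}]")
    if first_significant_day is None and lower > 0:
        first_significant_day = day

print("interval first excludes zero on day", first_significant_day)
```

The point at which the lower edge of the interval rises above zero corresponds to the moment on the chart where the shaded area clears the zero line.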

Wrap Up

It seems that Google still relies on us setting well-optimised H1 and title tags, and that even small changes to these, when data-driven and well researched, can make a difference to your organic search performance.

Good places to do this research include Moz’s new Keyword Explorer tool, Google AdWords (if you already have this data), and Google Keyword Planner.
