Start here: how our SEO split tests work

If you aren't familiar with how we run the controlled SEO experiments that form the basis of all our case studies, you may want to read the explanation at the end of this article before diving into the details of the case study below. If you'd like to get a new case study by email every two weeks, just enter your email address here.

Meta descriptions have long been one of SEO's most debated elements. They don't directly influence rankings, which Google has confirmed, but they remain a critical lever for click-through rates. A well-crafted meta description is essentially ad copy: It speaks directly to a searcher's intent, and when it lands, it can meaningfully increase the share of clicks your page earns from a given set of impressions.

The hypothesis behind this test draws on a well-established paid search tactic: using promotional messaging to drive higher click-through rates. The question this test asked is whether the same logic holds in organic search, where the rules of engagement can differ greatly.

The Case Study

In organic search, click-through rate optimization can pay off in more than one way. Google uses CTR as one signal among many when evaluating how well a result is serving its users, so a page that earns more clicks than expected for its ranking position may benefit from a rankings boost over time. This means CTR improvements can compound into traffic gains beyond the direct click lift.

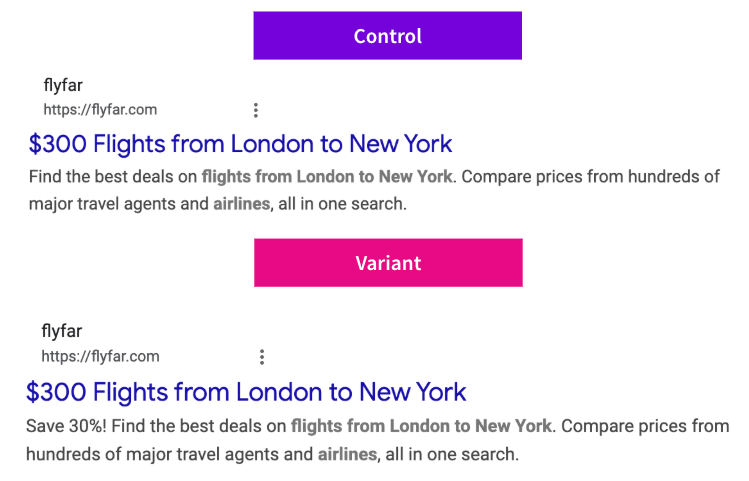

In today's case study, we look at a test in which one of our travel customers added "Save 30%" to their meta descriptions. The hypothesis was that surfacing this kind of value proposition in the meta description would increase CTR on flight search results pages, driving more organic sessions.

But would it work equally everywhere?

This test was run as a global experiment: it was deployed simultaneously across two separate country domains, India (IN) and the UK, and its impact was measured in each market independently. Running tests globally is valuable precisely because it shows whether a change behaves universally or whether market-specific factors, such as search behavior, the competitive landscape, user price sensitivity, or how Google renders results in different regions, cause the same change to land differently in different places.

What Was Changed

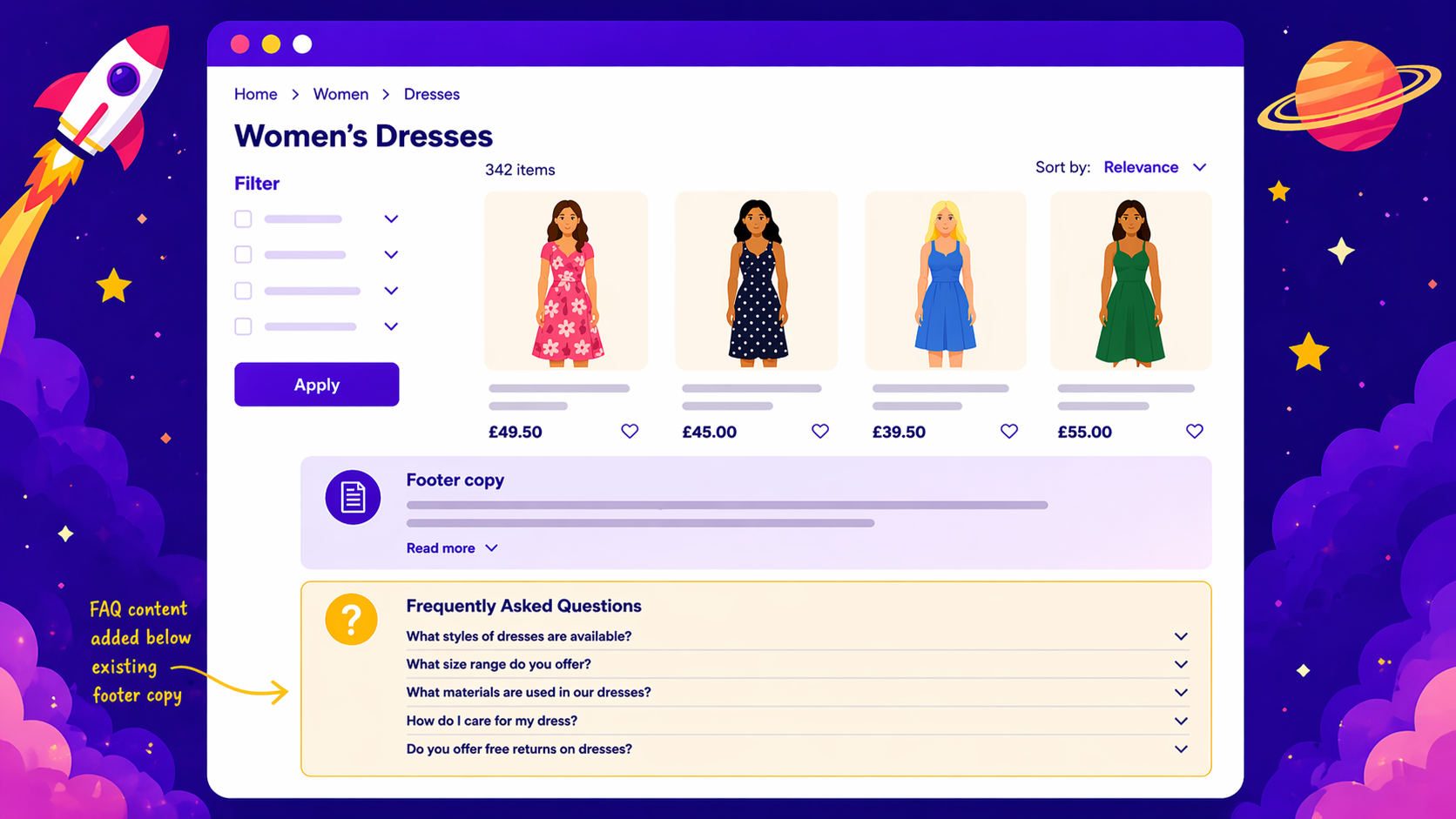

The change itself was straightforward: the meta descriptions on flight pages were updated to include the phrase "Save 30%" as a prominent piece of CTA messaging within the description text.

Results

India

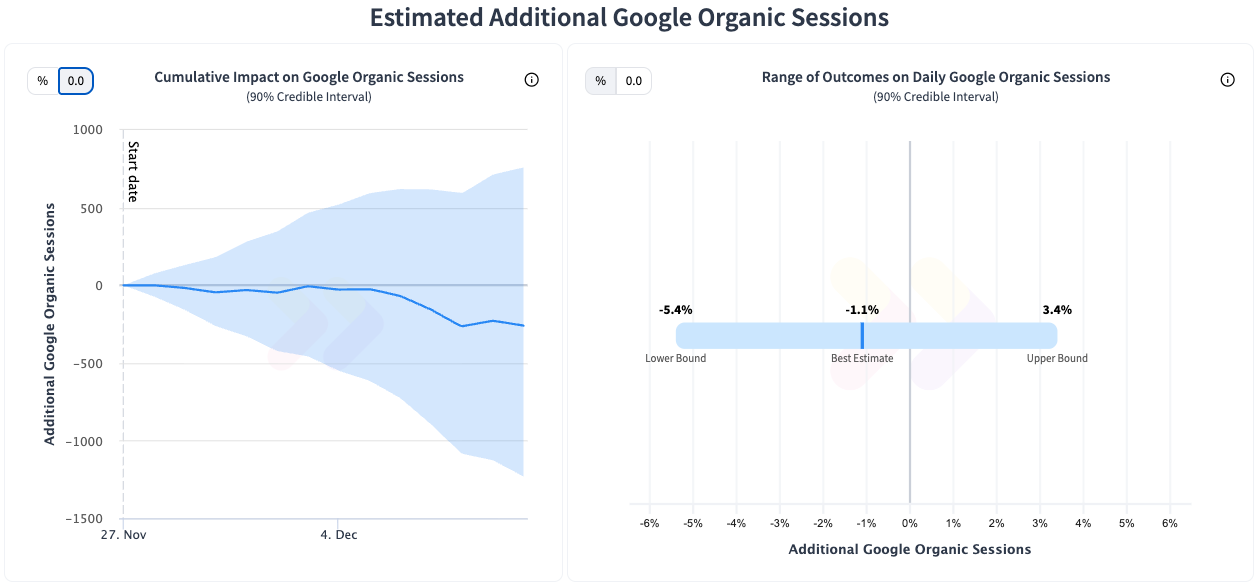

UK

The results reinforced our understanding that SEO changes do not have the same impact across markets.

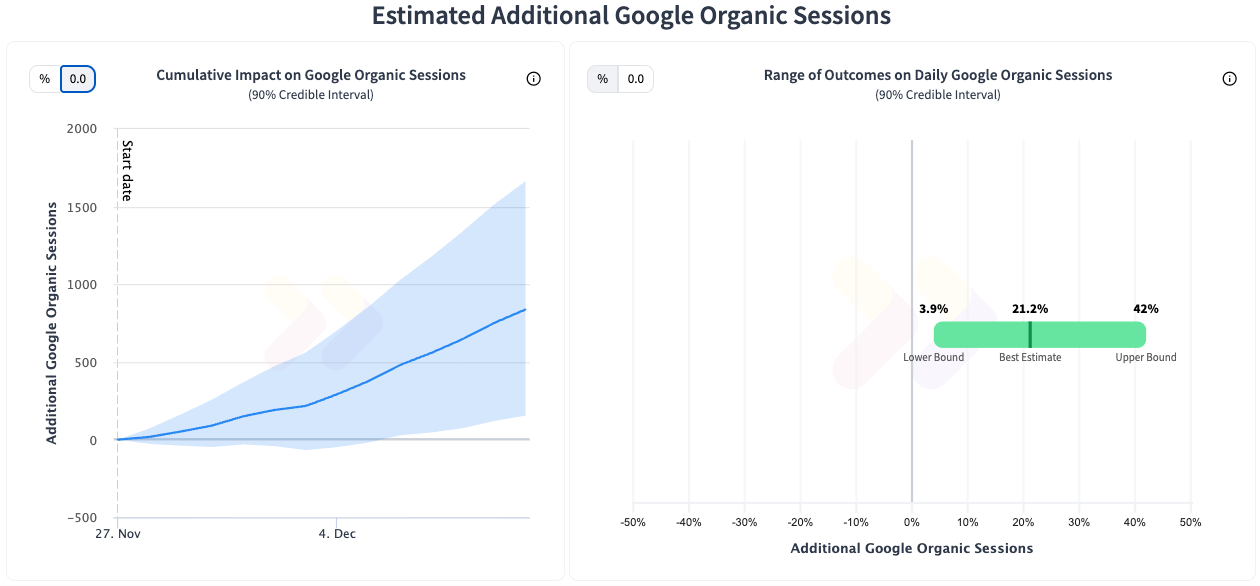

In the India market, the test returned a statistically significant positive result: a +21.2% increase in organic sessions. In the UK market, however, the test did not produce a statistically significant result, meaning we could not confidently distinguish its effect from zero.

Why might the same change produce such different outcomes?

This divergence is arguably more interesting than either result in isolation, and it's the kind of insight that only a multi-market test can surface. It is also why we have seen a growing trend among our global customers toward multi-market testing: they value understanding how their markets differ.

That understanding lets them be much more nuanced about how and where they implement SEO changes.

As always, we recommend testing these kinds of changes in your own context. What resonates with one audience may not resonate with another, and promotional language in particular has a way of behaving unpredictably across different markets and user bases.

To receive more insights from our testing, sign up for our case study mailing list, and please feel free to get in touch if you want to learn more about this test or our split testing platform more generally.

How our SEO split tests work

The most important thing to know is that our case studies are based on controlled experiments with control and variant pages:

- By detecting changes in performance of the variant pages compared to the control, we know that the measured effect was not caused by seasonality, sitewide changes, Google algorithm updates, competitor changes, or any other external impact.

- The statistical analysis compares the actual outcome to a forecast and comes with a confidence interval, so we know how confident we can be that the effect is real.

- We measure the impact on organic traffic in order to capture changes to rankings and/or changes to click-through rate.
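To make the "actual outcome versus forecast" idea concrete, here is a deliberately simplified sketch. It assumes a very basic forecast: the variant pages' expected daily organic sessions are the control pages' sessions scaled by the variant-to-control ratio observed before the test, and the confidence interval comes from bootstrapping test-period days. The function names (`forecast_ratio`, `uplift`, `estimate_effect`, `is_significant`) and all numbers are hypothetical; this is not SearchPilot's production model, which this article does not describe.

```python
import random
from typing import List, Tuple


def forecast_ratio(control_pre: List[float], variant_pre: List[float]) -> float:
    """Assumed forecast model: one scale factor mapping control sessions to
    expected variant sessions, fitted on the pre-test period."""
    return sum(variant_pre) / sum(control_pre)


def uplift(control_test: List[float], variant_test: List[float], ratio: float) -> float:
    """Relative difference between actual and forecast variant sessions."""
    forecast = ratio * sum(control_test)
    return sum(variant_test) / forecast - 1.0


def estimate_effect(control_pre: List[float], variant_pre: List[float],
                    control_test: List[float], variant_test: List[float],
                    n_boot: int = 2000, alpha: float = 0.05,
                    seed: int = 0) -> Tuple[float, float, float]:
    """Return (point uplift, CI lower bound, CI upper bound).

    The interval is a percentile bootstrap over test-period days, keeping
    each day's control and variant values paired."""
    ratio = forecast_ratio(control_pre, variant_pre)
    point = uplift(control_test, variant_test, ratio)
    rng = random.Random(seed)  # fixed seed so results are reproducible
    days = len(control_test)
    boots = []
    for _ in range(n_boot):
        idx = [rng.randrange(days) for _ in range(days)]
        boots.append(uplift([control_test[i] for i in idx],
                            [variant_test[i] for i in idx], ratio))
    boots.sort()
    lo = boots[int(alpha / 2 * n_boot)]
    hi = boots[int((1 - alpha / 2) * n_boot) - 1]
    return point, lo, hi


def is_significant(lo: float, hi: float) -> bool:
    """Treat the effect as significant only if the interval excludes zero."""
    return lo > 0 or hi < 0


if __name__ == "__main__":
    # Hypothetical daily organic sessions, not data from this case study.
    control_pre = [1000, 1100, 950, 1050]
    variant_pre = [500, 550, 475, 525]
    control_test = [1000, 1200, 900, 1100]
    variant_test = [590, 730, 530, 670]
    point, lo, hi = estimate_effect(control_pre, variant_pre, control_test, variant_test)
    print(f"uplift {point:+.1%}, 95% CI [{lo:+.1%}, {hi:+.1%}], "
          f"significant={is_significant(lo, hi)}")
```

Resampling individual days ignores day-to-day autocorrelation and the uncertainty in the pre-period ratio, so intervals from a sketch like this will tend to be too narrow; a real analysis needs a stronger forecast model and error estimate.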

Read more about how SEO testing works or get a demo of the SearchPilot platform.