Start here: how our SEO split tests work

If you aren't familiar with the fundamentals of how we run controlled SEO experiments that form the basis of all our case studies, then you might find it useful to start by reading the explanation at the end of this article before digesting the details of the case study below. If you'd like to get a new case study by email every two weeks, just enter your email address here.

Title tags are one of the most visible SEO levers available to large websites. They directly influence how pages appear in search engine results pages (SERPs) and can shape both rankings and click-through behaviour. For enterprise sites with thousands of property pages, even small, systematic changes to title tag formatting can have meaningful effects at scale. One seemingly minor question is whether abbreviated state codes (e.g., "TX") or full state names (e.g., "Texas") better serve users and search engines in these snippets. In this week's case study, we’re reviewing an experiment that expanded abbreviated state codes to full state names in title tags to see if there’s any measurable impact on organic search performance.

The Case Study

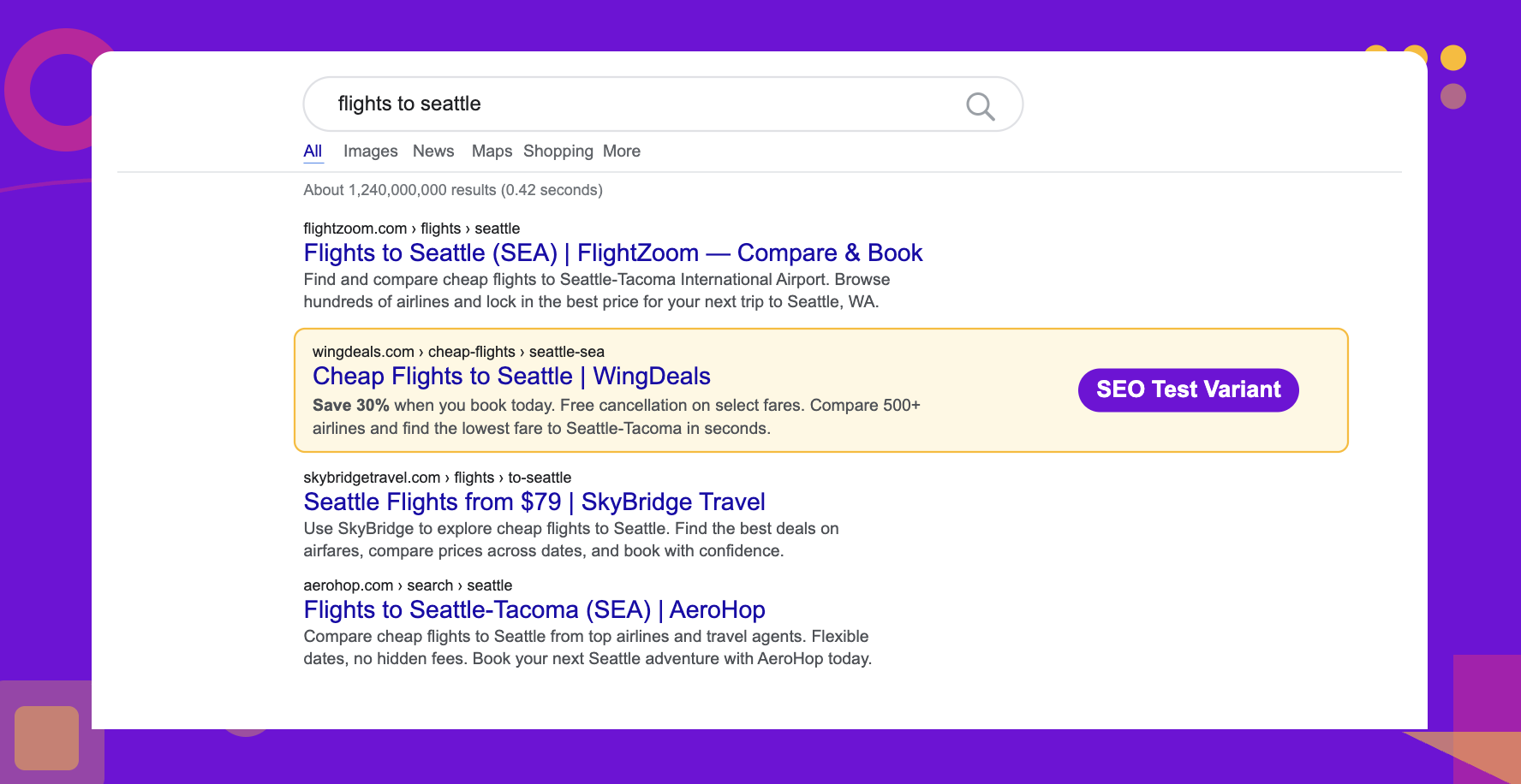

Enterprise websites typically generate title tags programmatically, with structured templates applied consistently across tens of thousands of pages. A common pattern includes a brand name, a city, and a state identifier, which is often rendered as an abbreviated state code to save characters in a limited space.

The rationale for expanding these abbreviations is that full state names are more descriptive, may better match how users phrase location-based searches, and could signal greater relevance to search engines evaluating keyword alignment. Our customer in the travel industry has pages that compete in dense, intent-driven SERPs, where even a marginal improvement in keyword relevance or perceived trustworthiness could translate to meaningful traffic gains.

To test this, we ran a controlled experiment on their pages across the domain, changing only the state identifier in the title tag.

What Was Changed

We expanded the abbreviated state code in the title tags of property pages to the full state name.
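As a rough illustration of this kind of change, the sketch below expands a state code that follows a comma in a title string. The title format, the `ExampleBrand` name, the `STATE_NAMES` lookup, and the `expand_state_code` helper are all hypothetical and are not taken from the customer's actual template.

```python
import re

# Hypothetical lookup table; a real one would cover every state and territory.
STATE_NAMES = {
    "TX": "Texas",
    "CA": "California",
    "NY": "New York",
}

# Match a two-letter uppercase code that directly follows ", " (e.g. "Austin, TX").
STATE_CODE = re.compile(r"(?<=, )([A-Z]{2})\b")


def expand_state_code(title: str) -> str:
    """Replace a known state code after a comma with the full state name."""
    def _swap(match: re.Match) -> str:
        code = match.group(1)
        return STATE_NAMES.get(code, code)  # leave unknown codes untouched

    return STATE_CODE.sub(_swap, title)


# Assumed example title, for illustration only.
control_title = "Hotels in Austin, TX | ExampleBrand"
variant_title = expand_state_code(control_title)

print(variant_title)
print(len(variant_title) - len(control_title))  # extra characters added to the title
```

Printing the length difference alongside the new title makes it easy to see how much longer each variant title becomes, which matters when titles are close to the point where search engines truncate them.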

Results

The test produced a negative result. We observed an estimated 4% decrease in organic clicks, with a 95% credible interval ranging from a loss of approximately 2,000 monthly clicks to a loss of approximately 29,000. The GA sessions metric was inconclusive at the 95% level, so neither measurement approach showed a benefit from the change.

One possible reason for the decline is that expanding state names made title tags longer without adding meaningful keyword value. In competitive travel SERPs, where users scan results quickly, shorter and more scannable titles may perform better. Lengthening the title could also push other important terms — such as brand names or location keywords — out of the visible snippet area, reducing their impact on click-through decisions.

Another factor worth noting is that Google did not consistently adopt the new title tags. In some cases the full state names appeared as intended, but in others Google reverted to the original abbreviated versions or rewrote the title entirely. This inconsistency likely diluted the effect of the test.

It is also possible that Google already treats state abbreviations and their full-name equivalents as semantically equivalent for ranking purposes, meaning the change offered no additional keyword signal. The longer format may have just introduced a presentational disadvantage in the SERPs.

This result is a useful reminder that changes which appear to add clarity or keyword richness do not always translate into improved performance. Keyword changes carry the risk of misaligning a page with search intent or of moving it into competition for more heavily contested queries. In fast-moving, competitive verticals like travel, the visual dynamics of search snippets can matter as much as the semantic content within them. Teams running similar experiments on geo-specific metadata should consider testing scannability and title length as variables in their own right.

To receive more insights from our testing, sign up for our case study mailing list, and please feel free to get in touch if you want to learn more about this test or our split testing platform more generally.

How our SEO split tests work

The most important thing to know is that our case studies are based on controlled experiments with control and variant pages:

- By detecting changes in performance of the variant pages compared to the control, we know that the measured effect was not caused by seasonality, sitewide changes, Google algorithm updates, competitor changes, or any other external impact.

- The statistical analysis compares the actual outcome to a forecast, and comes with a confidence interval so we know how certain we are the effect is real.

- We measure the impact on organic traffic in order to capture changes to rankings and/or changes to clickthrough rate (more here).
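To make the idea of comparing an actual outcome to a forecast more concrete, here is a deliberately simplified sketch. It is not the model behind these case studies: it assumes the variant group's traffic would have kept the same ratio to the control group's traffic that it had before the test, and it produces no interval. The function names and all traffic numbers are made up for illustration.

```python
from typing import Sequence


def forecast_variant(
    pre_control: Sequence[float],
    pre_variant: Sequence[float],
    test_control: Sequence[float],
) -> list[float]:
    """Forecast variant traffic during the test from control traffic.

    Assumption: without the change, variant traffic would keep the same
    ratio to control traffic that it had in the pre-test period.
    """
    ratio = sum(pre_variant) / sum(pre_control)
    return [ratio * c for c in test_control]


def estimated_effect(
    pre_control: Sequence[float],
    pre_variant: Sequence[float],
    test_control: Sequence[float],
    test_variant: Sequence[float],
) -> float:
    """Relative difference between actual and forecast variant traffic."""
    forecast = forecast_variant(pre_control, pre_variant, test_control)
    return (sum(test_variant) - sum(forecast)) / sum(forecast)


# Made-up daily click counts, for illustration only.
effect = estimated_effect(
    pre_control=[1000, 1100, 900],
    pre_variant=[500, 550, 450],
    test_control=[1200, 1300],
    test_variant=[588, 637],
)
print(f"{effect:.1%}")
```

Because the forecast is built from the control pages during the same period, anything that moves both groups together (seasonality, sitewide changes, algorithm updates) is already reflected in the forecast, and only the difference attributable to the change remains.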

Read more about how SEO testing works or get a demo of the SearchPilot platform.