Even before the rise of AI, we knew that zero-click searches were on the rise.

The growth of AI Overviews and AI Mode has continued the trend, and an increasing number of people are turning to their favourite AI tool to research the things they want to buy.

For some industries, this presents a real threat. One of the reasons we have chosen to focus so much on retail and ecommerce is that I'm excited about the future for retail: ChatGPT isn't going to ship you a new pair of shoes. The role of the manufacturer, brand, and retailer might change, but there is a role for all of them for the foreseeable future.

It's very important, though, to stare a specific reality in the face:

- Search volume is up

- Online retail is up

- Traffic might be flat or down

How can this be true?

The answer lies in the changing value of a click.

If there are fewer clicks and more revenue, those clicks must be worth more. Why might that be?

Why might a 2028 click be worth more than a 2023 click?

There are some very interesting academic papers about searcher journeys across many tabs and multiple sessions (see e.g. Stochastic Models for Tabbed Browsing [PDF]). The most intuitive explanation is that, at least for some searchers, at least in part, this journey is being replaced by research done by an AI tool or agent.

That means we still see the last click, but don't necessarily see all the research clicks along the way. Fewer clicks, same revenue.
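To make the arithmetic concrete, here is a minimal sketch. The figures are purely hypothetical and chosen only for illustration; they are not measurements from any real site. It assumes the research clicks disappear while the purchasing clicks (and the revenue they bring) stay the same.

```python
def revenue_per_click(revenue: float, clicks: int) -> float:
    """Average revenue attributed to each organic click."""
    return revenue / clicks


# Hypothetical "before" period: searchers do their own research,
# so many of the clicks we see never lead to a purchase.
before = revenue_per_click(revenue=10_000.0, clicks=5_000)

# Hypothetical "after" period: an AI tool absorbs some research clicks,
# but the final purchasing clicks, and the revenue, are unchanged.
after = revenue_per_click(revenue=10_000.0, clicks=4_000)

print(f"before: {before:.2f} per click, after: {after:.2f} per click")
```

In this made-up scenario traffic falls by 20% while each remaining click is worth 25% more, which is why a traffic chart alone would tell the wrong story.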

What we'd expect to see, if these systems are better than searching for yourself, is even more revenue. More customers matched more closely with more things they'd like to buy? You'd expect to see them buy more. I don't think we are anywhere near the endgame here. I expect to see many more links in LLM-generated responses because I still think the hyperlink is the best user experience here.

But clicks could still be down.

Stop reporting on year over year organic traffic now!

I mean, hopefully you stopped when Rand told you to last year, but the next best time is now.

I've long argued that you can't just add up winning test results and expect them to sum to your year over year performance. There have always been macro effects, algorithm updates, and competitor activity, but this may be the biggest shift in consumer behaviour since people started buying things online.

Searching isn't going anywhere. Searchers aren't going anywhere. Search engines aren't even going anywhere. But if you fail to educate your organisation about these trends, you could find yourself in a situation where organic search is the biggest source of new customer acquisition, you have made a number of positive improvements, and the business is doing great, but a fixation on year over year traffic means your work is undervalued, or worse.

All of this technical innovation shouldn't obscure the fact that it's humans that want things, and optimising to be found when they go looking for those things is our job.

What about experiments designed to send more clicks?

I first wrote in 2018 about our reasons for using traffic as the primary success metric for most SEO tests. Even with everything I've written about here, that all remains true, and traffic is still the most common primary metric big retailers use to measure test outcomes:

- No amount of rank (or prompt!) tracking can cover the full universe of ways people search

- Many successful tests influence click-through rate positively, and there's no other way to measure that

- Revenue can be an important guardrail metric but conversion data adds additional sources of noise and leads to under-powered tests

With regard to the changing value of a click, I don't worry about that for measuring individual tests because:

- Tests run for a few weeks at a time, and these shifts aren't substantial on that time horizon

- Any changes that are present affect both control and variant, and hence are controlled for; if AI discovery makes clicks rarer or more valuable, that affects both groups during the test, and the statistical analysis can account for it without undermining the decision about what to do
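The second point can be sketched in a few lines. This is a simplified illustration, not the statistical model used in real SEO tests: it assumes the market-wide shift acts as the same multiplicative factor on both groups, and the click counts, the `market_shift` value, and the function names are all hypothetical.

```python
def relative_uplift(control_clicks: float, variant_clicks: float) -> float:
    """Relative difference in clicks between variant and control."""
    return variant_clicks / control_clicks - 1.0


def apply_market_shift(clicks: float, factor: float) -> float:
    """Scale clicks by a market-wide factor (assumed equal for both groups)."""
    return clicks * factor


# Hypothetical test result: the variant pages earn 10% more clicks.
control, variant = 1_000.0, 1_100.0

# Hypothetical shift: AI discovery removes 20% of clicks everywhere.
market_shift = 0.8

uplift_without_shift = relative_uplift(control, variant)
uplift_with_shift = relative_uplift(
    apply_market_shift(control, market_shift),
    apply_market_shift(variant, market_shift),
)

print(f"without shift: {uplift_without_shift:.1%}")
print(f"with shift:    {uplift_with_shift:.1%}")
```

Under that assumption the common factor cancels out of the ratio, so the measured uplift, and the decision it supports, is the same with or without the shift.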

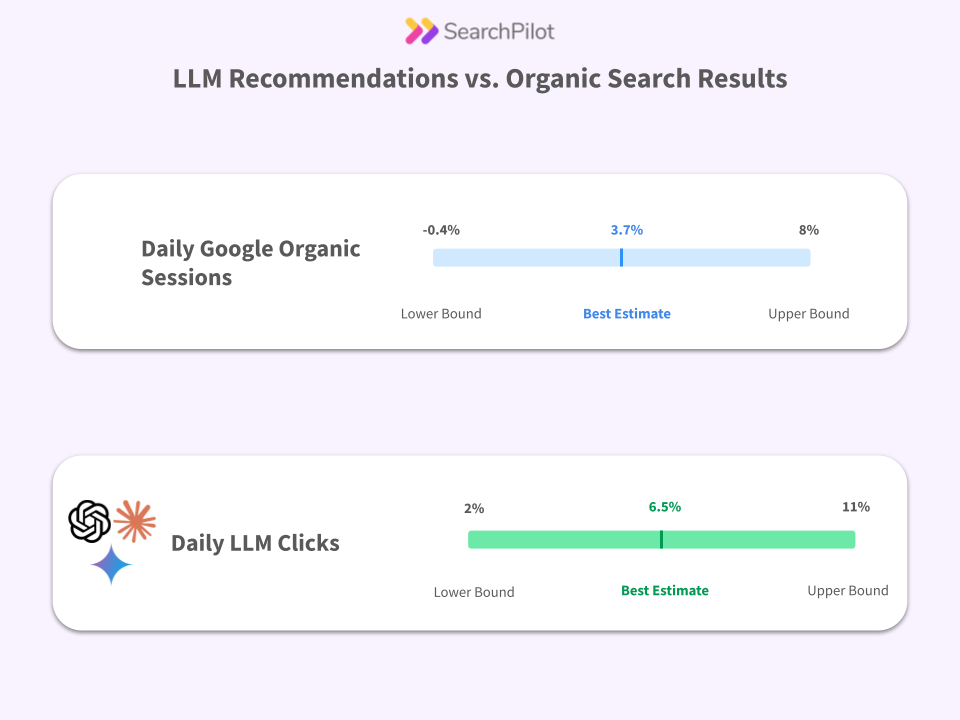

How should SEO testing evolve?

We're suggesting three primary improvements:

- Start considering LLM behaviour (including RAG) when designing hypotheses

- Consider a wider range of metrics, including guardrail metrics (we call this multi metrics analysis)

- Run GEO experiments directly

Summary

Do use clicks as a measure of the success of controlled SEO experiments.

Do not use year over year traffic trends as a measure of the success of a retail SEO program.
