Start here: how our SEO split tests work

If you aren't familiar with the fundamentals of how we run controlled SEO experiments that form the basis of all our case studies, then you might find it useful to start by reading the explanation at the end of this article before digesting the details of the case study below.

Meta descriptions sit at the intersection of search visibility and user behaviour. They shape how listings appear in search engine results pages (SERPs) and influence which result users choose to click. They do not directly affect rankings, but they can impact organic performance indirectly through click-through rate.

In competitive SERPs, even small changes (such as adding an emoji) can influence visual prominence, perceived relevance, and click-through behaviour.

In this week’s case study, we examine whether adding a relevant emoji to meta descriptions has any measurable impact on organic click-through rate and overall search performance.

The Case Study

Most large websites take a consistent, scalable approach to writing meta descriptions. Typically, they use concise, keyword-aligned copy that reinforces relevance and highlights key information.

This standardisation improves clarity and operational efficiency. However, it also means many listings in the SERPs look structurally similar. On crowded results pages, that uniformity can make it harder for individual listings to stand out.

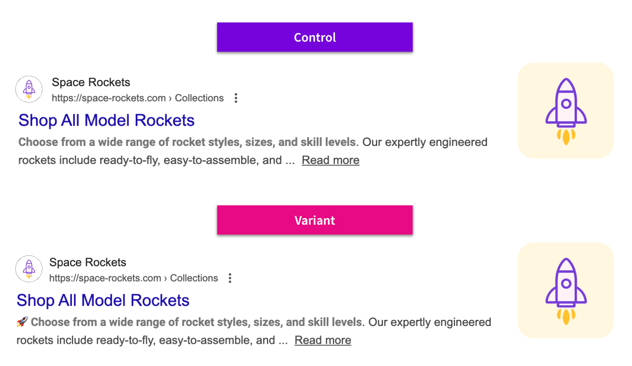

To test whether subtle visual differentiation could influence user behaviour, a customer added a single, contextually relevant emoji to a controlled set of meta descriptions across multiple markets and page types, leaving all other elements unchanged.

We aimed to test whether adding a small visual cue could make listings more noticeable in the SERPs and ultimately drive more organic traffic.

What Was Changed

We added an emoji to the start of the meta descriptions on a subset of pages, covering several page types across three domains.

Results

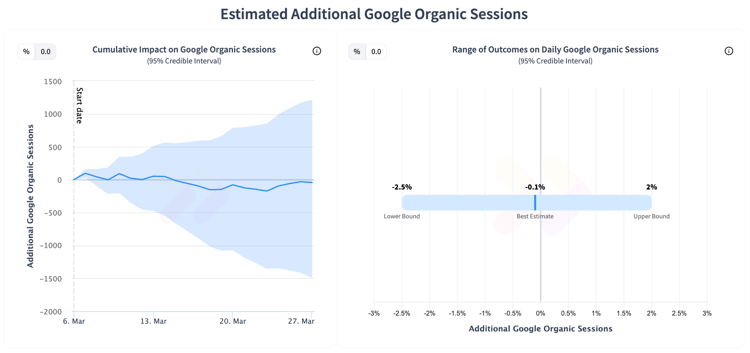

The results for organic Google sessions were inconclusive across all tests at the 95% credible interval, although one domain showed a moderate positive uplift at a lower credible interval. This indicates that while no market showed a measurable negative impact, the change did not consistently produce measurable improvements in organic performance either.

One possible reason for the overall inconclusive result is the subtlety of the test. Adding a single emoji to meta descriptions changes the visual presentation of listings but does not alter the underlying page content or relevance signals that search engines consider when ranking.

Unlike more substantial SEO changes, such as adding structured data, expanding content, or improving keyword targeting, this kind of minor formatting adjustment is unlikely to move the needle on rankings directly. We’ve also seen from previous metadata tests that adding clarity, such as specific keywords, tends to perform better across a variety of customer industries.

Although the results were mostly inconclusive, the positive uplift for organic Google sessions on one domain suggests that context matters. Certain listings or search environments may respond better to visual cues, even minor ones like an emoji. The test underscores that not every tweak will produce consistent results, but experimentation across page types and markets can uncover where minor changes might influence user behaviour.

The neutral outcome overall reinforces an important lesson we see across many tests: minor adjustments like this can generally be made safely without harming performance, and targeted tests can reveal where subtle changes might drive meaningful impact. Continuous experimentation helps teams understand these nuances, reducing risk and informing optimisation decisions. Sometimes the most valuable takeaway is simply knowing the effect of a change, whether positive, negative, or neutral.

To receive more insights from our testing, sign up for our case study mailing list, and please feel free to get in touch if you want to learn more about this test or our split testing platform more generally.

How our SEO split tests work

The most important thing to know is that our case studies are based on controlled experiments with control and variant pages:

- By detecting changes in performance of the variant pages compared to the control, we know that the measured effect was not caused by seasonality, sitewide changes, Google algorithm updates, competitor changes, or any other external impact.

- The statistical analysis compares the actual outcome to a forecast, and comes with a confidence interval so we know how certain we are the effect is real.

- We measure the impact on organic traffic in order to capture changes to rankings and/or changes to click-through rate.
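To make the idea of "comparing the actual outcome to a forecast" more concrete, the Python sketch below shows one simple way such a comparison could work. It is not SearchPilot's model, which this article doesn't describe. It assumes a basic ratio forecast (variant sessions predicted by scaling control sessions by their pre-test ratio) and a percentile bootstrap over days for the interval, which gives a frequentist confidence interval rather than the credible interval reported in the results above. The function names and all data are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def forecast_uplift(
    control_pre: Sequence[float],
    variant_pre: Sequence[float],
    control_post: Sequence[float],
    variant_post: Sequence[float],
) -> float:
    """Relative uplift of the variant over a control-based forecast (hypothetical)."""
    # How variant traffic related to control traffic before the change.
    scale = sum(variant_pre) / sum(control_pre)
    # Counterfactual: what the variant pages would have received without the change.
    forecast = scale * sum(control_post)
    return sum(variant_post) / forecast - 1.0


def bootstrap_interval(
    control_pre: Sequence[float],
    variant_pre: Sequence[float],
    control_post: Sequence[float],
    variant_post: Sequence[float],
    level: float = 0.95,
    n_resamples: int = 2000,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap interval for the uplift, resampling whole days."""
    rng = random.Random(seed)
    # Keep each day's control and variant values paired, so day-level effects
    # shared by both groups (e.g. seasonality) stay matched when resampling.
    pre_days = list(zip(control_pre, variant_pre))
    post_days = list(zip(control_post, variant_post))
    estimates: List[float] = []
    for _ in range(n_resamples):
        pre = [rng.choice(pre_days) for _ in pre_days]
        post = [rng.choice(post_days) for _ in post_days]
        estimates.append(
            forecast_uplift(
                [c for c, _ in pre],
                [v for _, v in pre],
                [c for c, _ in post],
                [v for _, v in post],
            )
        )
    estimates.sort()
    lo_idx = round((1 - level) / 2 * n_resamples)
    hi_idx = round((1 + level) / 2 * n_resamples) - 1
    return estimates[lo_idx], estimates[hi_idx]


if __name__ == "__main__":
    # Hypothetical daily organic sessions: 28 days before and 28 days after the change.
    rng = random.Random(42)
    control_pre = [1000 + rng.gauss(0, 50) for _ in range(28)]
    variant_pre = [c * 0.5 + rng.gauss(0, 25) for c in control_pre]
    control_post = [1000 + rng.gauss(0, 50) for _ in range(28)]
    # Simulate a 3% true uplift on the variant pages.
    variant_post = [c * 0.5 * 1.03 + rng.gauss(0, 25) for c in control_post]

    point = forecast_uplift(control_pre, variant_pre, control_post, variant_post)
    lo, hi = bootstrap_interval(control_pre, variant_pre, control_post, variant_post)
    print(f"uplift {point:+.1%}, 95% interval [{lo:+.1%}, {hi:+.1%}]")
```

In this framing, an interval that straddles zero corresponds to an inconclusive result at that level, which is what most of the emoji tests showed. Because the control pages share the same time period, external shocks that hit both groups tend to cancel out of the ratio.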

Read more about how SEO testing works or get a demo of the SearchPilot platform.